Why the fuck did I write a Rust crate?

tl;dr summary

I wrote a Rust crate for Freebox OS because vulnerability research stopped being fun once curl and Python scripts became the weakest part of the investigation. The crate turned auth, tokens, TLS, typed endpoints, and raw probing into reliable primitives while I reported two vulnerabilities, kept digging into a third, and accidentally rediscovered an old Freebox easter egg.

This whole thing started as a challenge I gave to myself: could I get something RCE-shaped on a Freebox, software-only?

The idea came from reading hardware-heavy router and terminal research, especially the Starlink work where Lennert Wouters used voltage fault injection against the user terminal. The public write-ups are ridiculous in the best way: WIRED covered the custom modchip and fault injection path, Ars Technica summarized the solder-heavy route to code execution, and the talk itself, Glitched on Earth by Humans, is exactly the kind of research that makes you look at consumer network hardware differently.

I loved the idea. Unfortunately, I suck at hardware.

So I looked at the box I already had: a Freebox, sitting in my living room, running a large amount of software, exposing a documented API, and carrying the strange historical weight of French ISP hardware that has been iterated on for a very long time.

The result was:

- a Rust crate for Freebox OS

- two vulnerabilities privately reported to the vendor (self-assessed CVSS 3.1 scores of 8.5 and 8.7, both High)

- a third bug that got me closer than expected to becoming root

- and, somehow, the rediscovery of an easter egg old enough to make the codebase feel like archaeology

No payloads, no vulnerable endpoints, no reproduction steps.

This is about the research loop, the tooling, and why the crate became the difference between poking at a router and doing something repeatable.

The starting point was not elegant

The first version of the research stack was exactly what you would expect:

- curl

- python + requests

- some JSON

- regrets

That is fine for a first look. It is even good for a first look. curl makes the shape of an API visible. Python makes iteration cheap. Burp-shaped workflows and little throwaway scripts are still how a lot of useful security research begins.

But Freebox OS has enough surface area that scripts started becoming the weakest part of the process almost immediately.

The problem was that every idea required doing the same boring setup correctly:

- discover the API version and base URL

- handle the login challenge

- compute the session password

- send the session request

- carry the session token

- refresh when the session expires

- remember which app token belongs to which local tool

- deal with TLS policy around Freebox hosts

- call a typed endpoint when possible

- drop to raw JSON when necessary
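The "compute the session password" step is the one item in that list with real arithmetic in it. Here is a minimal sketch, assuming the scheme described in the public Freebox OS API documentation, where the session password is the hex-encoded HMAC-SHA1 of the login challenge, keyed with the app token. The function names are illustrative and are not the crate's API. The hand-rolled SHA-1 only keeps the example dependency-free; real code should use audited crates instead.

```rust
/// SHA-1 over `data` (std-only, for illustration).
fn sha1(data: &[u8]) -> [u8; 20] {
    let mut h: [u32; 5] = [0x67452301, 0xEFCDAB89, 0x98BADCFE, 0x10325476, 0xC3D2E1F0];

    // Padding: 0x80, zeros up to 56 mod 64, then the bit length as a big-endian u64.
    let mut msg = data.to_vec();
    let bit_len = (data.len() as u64) * 8;
    msg.push(0x80);
    while msg.len() % 64 != 56 {
        msg.push(0);
    }
    msg.extend_from_slice(&bit_len.to_be_bytes());

    for block in msg.chunks(64) {
        // Expand the 16 input words into an 80-word schedule.
        let mut w = [0u32; 80];
        for i in 0..16 {
            let j = 4 * i;
            w[i] = u32::from_be_bytes([block[j], block[j + 1], block[j + 2], block[j + 3]]);
        }
        for i in 16..80 {
            w[i] = (w[i - 3] ^ w[i - 8] ^ w[i - 14] ^ w[i - 16]).rotate_left(1);
        }

        let (mut a, mut b, mut c, mut d, mut e) = (h[0], h[1], h[2], h[3], h[4]);
        for i in 0..80 {
            let (f, k) = match i {
                0..=19 => ((b & c) | (!b & d), 0x5A827999u32),
                20..=39 => (b ^ c ^ d, 0x6ED9EBA1u32),
                40..=59 => ((b & c) | (b & d) | (c & d), 0x8F1BBCDCu32),
                _ => (b ^ c ^ d, 0xCA62C1D6u32),
            };
            let temp = a
                .rotate_left(5)
                .wrapping_add(f)
                .wrapping_add(e)
                .wrapping_add(k)
                .wrapping_add(w[i]);
            e = d;
            d = c;
            c = b.rotate_left(30);
            b = a;
            a = temp;
        }
        for (state, value) in h.iter_mut().zip([a, b, c, d, e]) {
            *state = state.wrapping_add(value);
        }
    }

    let mut out = [0u8; 20];
    for (i, word) in h.iter().enumerate() {
        out[4 * i..4 * i + 4].copy_from_slice(&word.to_be_bytes());
    }
    out
}

/// HMAC-SHA1 as defined in RFC 2104 (block size 64 bytes).
fn hmac_sha1(key: &[u8], message: &[u8]) -> [u8; 20] {
    let mut k = [0u8; 64];
    if key.len() > 64 {
        k[..20].copy_from_slice(&sha1(key));
    } else {
        k[..key.len()].copy_from_slice(key);
    }
    let mut inner: Vec<u8> = k.iter().map(|&b| b ^ 0x36).collect();
    inner.extend_from_slice(message);
    let inner_hash = sha1(&inner);
    let mut outer: Vec<u8> = k.iter().map(|&b| b ^ 0x5c).collect();
    outer.extend_from_slice(&inner_hash);
    sha1(&outer)
}

/// Lowercase hex encoding.
fn hex(bytes: &[u8]) -> String {
    bytes.iter().map(|b| format!("{b:02x}")).collect()
}

/// Hypothetical helper: the value sent as `password` when opening a session
/// (assumed scheme: HMAC-SHA1 of the challenge, keyed with the app token).
fn session_password(app_token: &str, challenge: &str) -> String {
    hex(&hmac_sha1(app_token.as_bytes(), challenge.as_bytes()))
}
```

Getting this wrong by one byte produces an authentication failure that looks exactly like a bad token, which is why it is worth writing once and never touching again.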

While tinkering is fun, finding actual bugs and vulnerabilities is more fun, so I had to drop this garbage.

Freebox OS has a very particular API shape

The Freebox OS API is not some tiny hidden debug port with three routes and a prayer. It is a real REST-ish API with authentication, permissions, system configuration, LAN state, DHCP, filesystem operations, downloads, Wi-Fi, TV/PVR, player control, storage, firewall, calls, notifications, VMs, domotics and a long tail of optional domains.

It also feels old in the way long-lived product APIs often feel old.

Not bad! Just... old.

Some endpoints are clean. Some return shapes that need compatibility handling. Some domains are typed enough to deserve proper models. Some endpoints return configured credentials in cleartext. That surprised me for about five seconds, then I remembered this is a router admin API: if a caller can read that configuration, the same caller can usually change it too.

When an API has many domains, stateful auth, old compatibility layers, physical pairing, and privileged router configuration, the research problem becomes less about sending one clever request and more about moving quickly across the system without lying to yourself.

That is where the crate idea came from.

Physical approval changes the tooling problem

Freebox OS application authentication has a pairing flow. A tool asks for authorization, the physical Freebox shows a prompt on its display, and the user approves it on the device itself.

Sending an HTTP request that requires a physical input is super cool, but it is also annoying when you are doing repeated local research (because I have to get out of my bedroom every time).

The crate therefore needed to make pairing a first-class flow:

- describe the application

- request authorization

- poll the authorization status

- persist the granted app token

- load the token later

- start authenticated sessions from that token

- refresh sessions automatically

- keep secrets out of debug output

This is the kind of work that feels over-engineered until the first time it saves an entire evening.
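Two items from that list are easy to show in isolation: polling the authorization status and keeping secrets out of debug output. This is a minimal sketch, not the crate's code. The type and function names are made up for the example, and the status strings are an assumption based on the public Freebox OS API documentation.

```rust
use std::fmt;

/// App token wrapper whose `Debug` output never prints the secret.
pub struct AppToken(String);

impl AppToken {
    pub fn new(raw: impl Into<String>) -> Self {
        Self(raw.into())
    }

    /// Explicit accessor, so every use of the secret is easy to spot in review.
    pub fn expose(&self) -> &str {
        &self.0
    }
}

impl fmt::Debug for AppToken {
    fn fmt(&self, f: &mut fmt::Formatter<'_>) -> fmt::Result {
        f.write_str("AppToken([REDACTED])")
    }
}

/// Pairing status as reported while the prompt is on the display
/// (string values are assumed, see the lead-in).
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum TrackStatus {
    Unknown,
    Pending,
    Timeout,
    Granted,
    Denied,
}

impl TrackStatus {
    pub fn parse(s: &str) -> Option<Self> {
        match s {
            "unknown" => Some(Self::Unknown),
            "pending" => Some(Self::Pending),
            "timeout" => Some(Self::Timeout),
            "granted" => Some(Self::Granted),
            "denied" => Some(Self::Denied),
            _ => None,
        }
    }
}

#[derive(Debug, PartialEq, Eq)]
pub enum PairingError {
    Denied,
    TimedOut,
    UnknownRequest,
    GaveUp,
}

/// Calls `check` until the status is terminal or `max_polls` is exhausted.
/// `check` stands in for the HTTP request; real code would sleep between polls.
pub fn wait_for_approval<F>(mut check: F, max_polls: usize) -> Result<(), PairingError>
where
    F: FnMut() -> TrackStatus,
{
    for _ in 0..max_polls {
        match check() {
            TrackStatus::Granted => return Ok(()),
            TrackStatus::Denied => return Err(PairingError::Denied),
            TrackStatus::Timeout => return Err(PairingError::TimedOut),
            TrackStatus::Unknown => return Err(PairingError::UnknownRequest),
            TrackStatus::Pending => {} // still waiting for someone to press the button
        }
    }
    Err(PairingError::GaveUp)
}
```

Passing the status check in as a closure keeps the loop testable without a Freebox on the desk, and the hand-written `Debug` impl means a stray `{:?}` in a log line cannot leak the token.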

Once token storage and session refresh were reliable, I could stop thinking about authentication. The crate could open a session, attach X-Fbx-App-Auth, retry once on invalid session, and expose a stable Freebox handle.

```rust
// Build an HTTP client for the Freebox with the automatic TLS policy.
let client = Freebox::client_for(&base_url, TlsPolicy::Auto)?;
let token_store = TokenStore::default_for(&app)?;

// Reuse a stored app token, or run the pairing flow if none is usable.
let token = match token_store.load(&app)? {
    TokenLoad::Valid(token) => token,
    TokenLoad::Missing | TokenLoad::NeedsAuthorization => {
        authorize_app(&client, &base_url, &app, &token_store, config, |track_id| {
            eprintln!("approve this app on the Freebox display: {track_id}");
        })
        .await?
    }
};

// Open an authenticated handle and call a typed endpoint.
let freebox = Freebox::from_app_token(client, base_url, app.app_id, token.app_token);
let config = freebox.system().config().await?;
```

Why Rust?

The honest answer is simple: I wanted to learn Rust.

There are better marketing answers. Rust has good type modeling, strong error handling, no garbage collector, nice async tooling, and a culture that encourages explicit API boundaries. All of that is true.

But the actual reason was that I wanted a real project where Rust was useful without being performative.

This was a good fit. The crate needed to model a network API, handle secrets carefully, provide ergonomic builders, keep raw escape hatches, and avoid turning every endpoint into a pile of serde_json::Value. It needed enough structure to be useful and enough flexibility to survive a router API that was not designed around my Rust type preferences.

That tension is productive.

If everything is raw JSON, the crate is just a fancy requests.Session.

If everything is modeled too aggressively, the crate becomes brittle and every undocumented field becomes a crisis.

The compromise I ended up liking is:

| Layer | Design |
|---|---|
| auth | typed and strict |
| token storage | typed and careful |
| transport | shared, authenticated, refresh-aware |
| stable domains | typed structs and builders |
| uncertain domains | raw JSON escape hatches (unfortunately soon to be deprecated) |
| unsupported endpoints | generic get, post, put, delete, raw_response |

That made the crate useful for normal automation and useful for security research.
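The "refresh-aware" transport row is the part that is easiest to get subtly wrong, so here is a minimal synchronous sketch of retrying exactly once on an invalid session. It is not the crate's implementation: `Session`, `ApiError`, and the closure-based login are hypothetical names chosen for the example, and the real thing is async.

```rust
#[derive(Debug, PartialEq, Eq)]
pub enum ApiError {
    /// The server rejected the session token (expired or revoked).
    InvalidSession,
    Other(String),
}

/// Caches a session token and knows how to obtain a new one via `login`.
pub struct Session<L> {
    token: Option<String>,
    login: L,
}

impl<L> Session<L>
where
    L: FnMut() -> Result<String, ApiError>,
{
    pub fn new(login: L) -> Self {
        Self { token: None, login }
    }

    /// Runs `request` with the current token. If the server reports an
    /// invalid session, logs in again and retries once, then gives up.
    pub fn call<T, F>(&mut self, mut request: F) -> Result<T, ApiError>
    where
        F: FnMut(&str) -> Result<T, ApiError>,
    {
        let token = self.ensure_token()?;
        match request(token.as_str()) {
            Err(ApiError::InvalidSession) => {
                // Drop the stale token so ensure_token() performs a fresh login.
                self.token = None;
                let fresh = self.ensure_token()?;
                request(fresh.as_str())
            }
            other => other,
        }
    }

    fn ensure_token(&mut self) -> Result<String, ApiError> {
        if self.token.is_none() {
            let new_token = (self.login)()?;
            self.token = Some(new_token);
        }
        Ok(self.token.clone().expect("token was set above"))
    }
}
```

Retrying once and only once matters: a loop that keeps logging in on every rejection can hide a real authorization problem behind what looks like flaky sessions.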

What the crate actually gave me

When testing a hypothesis, I could quickly:

- authenticate cleanly

- enumerate a domain

- inspect the raw shape

- promote stable parts into typed models

- mutate configuration through builders

- compare behavior before and after a state change

- chain one observation into the next request

- keep the same token/session behavior across every test

- fuzz around an endpoint without rewriting setup

That last point matters.

Fuzzing an API manually is not just random strings and chaos. Often it is structured exploration: vary one field, hold the rest stable, observe error classes, pivot to a nearby endpoint, compare authenticated and unauthenticated behavior, watch which objects accept partial updates, and keep enough logs to reconstruct what happened.
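"Vary one field, hold the rest stable" is simple enough to sketch. The example below generates one-field-at-a-time variants from a known-good baseline. It assumes a flat string-to-string body for brevity, the field names in the usage note are invented, and none of this is the crate's API.

```rust
use std::collections::BTreeMap;

/// A flat request body: field name to value. Real bodies are nested JSON;
/// a flat map keeps the idea visible.
pub type Fields = BTreeMap<String, String>;

/// One probe: the baseline with exactly one field changed.
#[derive(Debug, Clone, PartialEq, Eq)]
pub struct Variant {
    pub changed_field: String,
    pub fields: Fields,
}

/// For every field in `baseline`, emit one variant per candidate value,
/// skipping candidates equal to the baseline value (they would change nothing).
pub fn one_field_variants(baseline: &Fields, candidates: &[&str]) -> Vec<Variant> {
    let mut out = Vec::new();
    for name in baseline.keys() {
        for candidate in candidates {
            if baseline[name] == *candidate {
                continue;
            }
            let mut fields = baseline.clone();
            fields.insert(name.clone(), candidate.to_string());
            out.push(Variant {
                changed_field: name.clone(),
                fields,
            });
        }
    }
    out
}
```

With a baseline of `enabled = "true"` and `name = "lab"` and the candidates `""` and `"true"`, this yields three variants. Because each one records which field changed, a surprising response can be traced back to a single cause instead of a pile of simultaneous mutations.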

The difference between “I should test this later” and “I can test this in thirty seconds” is enormous. Security research rewards that kind of low-friction movement because most ideas are wrong, and the faster you can kill wrong ideas, the more time you have for the weird ones (and god did I have weird ideas, like physically shaking my Freebox).

The research loop

The actual loop looked roughly like this:

- Start with a hypothesis.

- Send a small probe.

- Observe the API behavior.

- Decide whether the behavior is interesting.

- Discard boring branches quickly.

- Stabilize promising requests in the crate.

- Explore nearby variants.

- Try to chain behavior across domains.

- Validate impact safely.

- Write a private report.

The important word is “safely.”

This was my own device, in my own environment, against a system I was allowed to test (I hope so at least). Even then, the goal was not to brick the box or break my WAN. I just needed to understand impact, reduce uncertainty, and produce reports that a vendor can triage without needing a dramatic story around them.

The crate helped because it made the probes reproducible. A vulnerability report is much stronger when the researcher can say what state was required, what API behavior was observed, and what impact follows, without mixing that up with “also my script was a bit cursed.”

What I found (without disclosing it)

I privately reported two vulnerabilities.

At the time of writing, they are still in triage and I am not going to publish technical details. They were tested against the then-current Freebox OS release, 4.10.1.

The first two issues can, at a high level, lead to taking control of the admin account.

My self-assessed CVSS 3.1 scores are:

| Finding | Status | Self-assessed score | High-level impact |
|---|---|---|---|
| Vulnerability 1 | reported, triage ongoing | 8.5 | 1-click account takeover on LAN |
| Vulnerability 2 | reported, triage ongoing | 8.7 | 1-click account takeover |
| Vulnerability 3 | not reported yet, still investigating | ? | got me unusually close to root privileges |
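For readers who have never computed one by hand, a CVSS 3.1 base score is plain arithmetic over a handful of metric weights. The sketch below follows the base-score equations from the FIRST specification as I understand them. The type and function names are made up for this example, and the vectors in the usage note are generic textbook ones, not the vectors of the findings above.

```rust
#[derive(Clone, Copy)]
pub enum Av { Network, Adjacent, Local, Physical }
#[derive(Clone, Copy)]
pub enum Ac { Low, High }
#[derive(Clone, Copy)]
pub enum Pr { None, Low, High }
#[derive(Clone, Copy)]
pub enum Ui { None, Required }
#[derive(Clone, Copy)]
pub enum Cia { High, Low, None }

/// The eight base metrics of a CVSS 3.1 vector.
pub struct Vector {
    pub av: Av,
    pub ac: Ac,
    pub pr: Pr,
    pub ui: Ui,
    pub scope_changed: bool,
    pub c: Cia,
    pub i: Cia,
    pub a: Cia,
}

/// Round up to one decimal, using the integer-based definition from the
/// 3.1 specification to avoid floating-point surprises.
fn roundup(x: f64) -> f64 {
    let n = (x * 100_000.0).round() as i64;
    if n % 10_000 == 0 {
        n as f64 / 100_000.0
    } else {
        ((n / 10_000) + 1) as f64 / 10.0
    }
}

pub fn base_score(v: &Vector) -> f64 {
    let weight = |x: Cia| -> f64 {
        match x {
            Cia::High => 0.56,
            Cia::Low => 0.22,
            Cia::None => 0.0,
        }
    };
    // Impact sub-score.
    let iss: f64 = 1.0 - (1.0 - weight(v.c)) * (1.0 - weight(v.i)) * (1.0 - weight(v.a));
    let impact: f64 = if v.scope_changed {
        7.52 * (iss - 0.029) - 3.25 * (iss - 0.02).powi(15)
    } else {
        6.42 * iss
    };
    if impact <= 0.0 {
        return 0.0;
    }

    // Exploitability sub-score; the Privileges Required weight depends on scope.
    let av: f64 = match v.av {
        Av::Network => 0.85,
        Av::Adjacent => 0.62,
        Av::Local => 0.55,
        Av::Physical => 0.2,
    };
    let ac: f64 = match v.ac {
        Ac::Low => 0.77,
        Ac::High => 0.44,
    };
    let pr: f64 = match (v.pr, v.scope_changed) {
        (Pr::None, _) => 0.85,
        (Pr::Low, false) => 0.62,
        (Pr::Low, true) => 0.68,
        (Pr::High, false) => 0.27,
        (Pr::High, true) => 0.5,
    };
    let ui: f64 = match v.ui {
        Ui::None => 0.85,
        Ui::Required => 0.62,
    };
    let exploitability: f64 = 8.22 * av * ac * pr * ui;

    let raw = if v.scope_changed {
        1.08 * (impact + exploitability)
    } else {
        impact + exploitability
    };
    roundup(raw.min(10.0))
}
```

As a sanity check, the familiar AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:H/A:H vector comes out at 9.8, and switching User Interaction to Required drops it to 8.8. That is roughly the neighborhood a "1-click" issue lands in before the other metrics are adjusted.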

The third vulnerability led me to rediscover an old easter egg that I’m pretty sure had long been forgotten.

I have not reported that one yet because I want to investigate it more. The point is to understand whether this path can become an RCE, because RCE would let me explore the system much more efficiently and find more issues with a better view of the actual runtime.

The easter egg problem

Old consumer devices are weird because code survives.

Jokes, comments, assumptions, half-removed features, compatibility layers, internal names, test hooks, and behaviors designed for a product generation that is no longer the one in your living room (or in anyone’s living room, in fact).

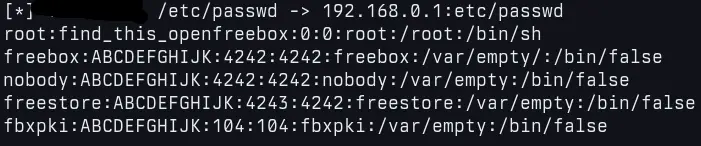

Freebox history has a lot of that energy. A not-so-old Univers Freebox article about Frédéric Hoguin’s old Freebox HD work, “Après 13 ans, une faille insolite et ultra pratique sur une ancienne Freebox dévoilée mais…” (roughly: “After 13 years, an unusual and very handy flaw in an old Freebox is revealed, but…”), describes a 13-year-old story involving Doom, root access, and developer easter eggs. The article mentions the Freebox HD default user id 4242 (which, 20 years later, still seems to be 4242), a reference to The Hitchhiker’s Guide to the Galaxy, and a root password string containing find_this_openfreebox as an explicit nod to the OpenFreebox community.

Consumer network equipment has long memory. Features get replaced, but assumptions stay. Services move, but conventions remain. An easter egg can outlive the engineers who wrote it, the threat model around it, and sometimes the product generation it belonged to.

Rediscovering something like that while doing modern security research feels like opening a wall in your apartment and finding a message from a previous tenant.

screenshot of me, reading /etc/passwd remotely, by exploiting my fancy third vulnerability

The crate is still meant to be useful

Even though the motivation was security research, I do not see the crate as disposable research scaffolding.

It is meant to be a real Freebox OS client:

- async

- reusable

- token-aware

- TLS-aware

- typed where useful

- raw where necessary

- usable for normal automation

- usable for research

That dual identity is important. Security tooling gets better when it is built like software instead of a one-off stunt. Normal automation tooling gets better when it is written by someone who has spent time asking how it breaks.

The crate does not need exploit code to be security-relevant!

It gives researchers and automation code a stable way to interact with Freebox OS. That is enough.

The repository is public here: github.com/ggmolly/freebox-rs.

The 3-day weekend timeline

The compressed timeline is almost stupid:

| Day | What happened |
|---|---|
| Friday (08/05) | first high-severity issue found and reported |
| Saturday (09/05) | second high-severity issue found and reported |
| Sunday (10/05) | crate finalized and published |

The first commits were older than that. The idea had been around for longer. But the intense part happened quickly because the tooling had finally become good enough.

By Sunday, publishing the crate felt less like a separate project and more like cleaning up the instrument I had been using all weekend.

Responsible disclosure means this post is incomplete on purpose

There are details I am not including:

- no affected endpoints

- no payloads

- no step-by-step reproduction

- no vulnerable service names

- no screenshots of sensitive states

- no code that demonstrates impact

- no hints that turn this into a scavenger hunt

The reports are private. Triage is ongoing. The vendor needs time to reproduce, assess, fix, and coordinate. Until then, the useful public story is the research process, the tooling, and the constraints around responsible disclosure.

I like technical write-ups. I like full disclosures when the time is right (unlike copyfail & dirtyfrag :^)). I like when a report eventually becomes a clean postmortem with enough detail to teach something real.

So, why the fuck did I write a Rust crate?

Because curl got me curious, Python got me moving, and then both became too annoying for the kind of research I wanted to do.

Freebox OS has a large enough API surface that the boring parts needed to be solid before the weird parts could become visible. I also wanted to learn Rust on something real.

Oh and also because after one weekend, two private reports, one still-unreported rabbit hole, and one decade-old easter egg, the crate stopped feeling like side tooling and started feeling like the only sane way to keep going!